Return to specs grading: Modern Algebra

This is the second of two posts on my use of specifications grading as I return to teaching in Fall 2018. In the first post, I described specs grading in general and then went into detail on how it's used in one of my courses, a hybrid-format Calculus 1 class. Here, I'm going to discuss my other course: Modern Algebra 1. I'm teaching two sections of this course, and as you might guess, the grading system turned out quite differently than Calculus.

About the course

Modern Algebra 1 is an upper-level course intended for mathematics majors and minors, focusing on theory of integers and integer arithmetic, rings, fields, and polynomials. It is called "abstract algebra" in some places; unlike many college courses on this subject we start with rings first, and then the second semester of the course is focused on the theory of groups. The course has a standard face-to-face format, and we meet twice a week for 75 minutes each.

Who takes Modern Algebra? Mostly mathematics majors; of the 46 students total in both sections of the course, 39 are majoring in mathematics. Of those 39 students, 23 of them are pre-service elementary or secondary mathematics teachers. That's half the entire enrollment, and it's no surprise – this course is one of the upper-level required courses for students seeking teacher certification, and it's one of the reasons we do rings first in the course, to get the theory of the course as close as possible to the algebra that these students will be teaching in middle and high schools.

The course has two prerequisites: Communicating in Mathematics (our introduction to proof course) and Linear Algebra. The first of these is especially important because Modern Algebra is theoretical in nature and so there are a lot of proofs. It's also a source of student stress, since proof is hard, and students often do not feel at all comfortable with proof writing despite making good grades in that course. (I know this because several of those students have already told me so.)

A T-shaped experience

The course design for Modern Algebra diverged from the design for Calculus fairly quickly as soon as I started thinking about learning targets.

My first attempt at specifications grading was in Winter semester 2015 when I taught two courses: the second semester of Discrete Structures for Computer Science, and the second semester of this course, Modern Algebra 2. I wrote about the specs grading design part of those courses at the time. When I designed Modern Algebra 2, I did the usual thing: I wrote out specific learning targets for which students will accumulate work that shows they have hit those targets. Here's the list of targets I came up with for the course.

Do not adjust your set: That is a total of 67 learning targets for the course. Of those, 30 are "concept check" objectives targeting foundational knowledge like stating definitions and theorem statements; the remaining 37 are "module" objectives that target higher-level tasks. And of the 37 module objectives, 24 are "core" module objectives that have a special place in the grading system, which you can read (if you dare) in the syllabus for the course.

Needless to say, this design concept is to be filed under "it seemed like a good idea at the time". I was staying true to the spirit of specs grading, I thought, but in practice the system became wildly overcomplicated. Just the very idea of keeping 67 Learning Targets straight in your mind, differentiated into CC and M and CORE-M objectives, is enough extraneous cognitive load to put off even some of the best learners. But the worst thing about having so many objectives was that by breaking down the course into so many atomic-level objectives and assessing each one individually, students lost the big picture of the course. The course itself, which contains some of the most beautiful parts of mathematics, became dissociated as students focused almost entirely on the pieces I'd created and not on the whole, on how all those pieces connect.

This time around, I thought about my experiences in 2015 and decided that:

- I want students to become very fluent on the foundational knowledge of the subject: Definitions, theorem statements, basic computations.

- But I also want them to become skilled at connections between the pieces of foundational knowledge: Applying the basics to new situations, doing nontrivial computations, making their own examples of structures, drawing conclusions using theorems, making conjectures, proving and analyzing written proofs of conjectures.

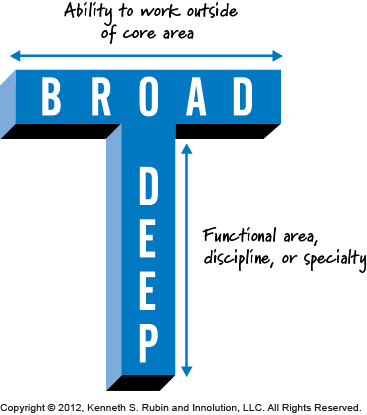

Thinking about this orthogonal relationship between foundations and connections brought to mind some discussions I had while on sabbatical at Steelcase last year about "T-shaped skills". Our discussions at Steelcase focused on the kind of educational experiences that learners need for the modern workplace, and how learning space design can support pedagogy that focuses on this.

I realized that this was also the idea I was trying, not very successfully, to capture with my specs grading setup in 2015. I want students to have deep functional knowledge of algebra, and the ability to connect that knowledge into a coherent whole both within the course and to application areas outside the course – including and especially, to teaching middle and high school mathematics. So this became the focus of the course design.

What students do, and what I do, in the course

To capture this T-shaped idea, I designed the course so that the vast majority of our class meetings will be spent working on the early stages of connections: Taking foundational knowledge and finding relationships among the parts, including parts we'd previously studied and parts outside the course itself (again, especially any place where course concepts have something to say about middle and high school math instruction). To make as much time for this as possible, the course is designed using flipped learning, and we learn most of the foundational knowledge – definitions, theorem statements, basic computation, etc. – before class through structured inquiry assignments. Then we extend both the foundations and the early connections after class through creative work, particularly working on proof-oriented problems.

In particular, an ideal lesson design will get learners engaged in the mathematical process of experimentation, conjecture, and proof like so:

- Students learn the foundations through pre-class activities and experiment with concepts, and bring in the results of their experiments to class.

- Then in class, we make connections by looking at their work and making conjectures about what we are all seeing, then work on proving those conjectures together.

And my role in all this is to design the learning activities, manage questions, keep groups in class functioning well, collect data on student comprehension, and coach students on any creative work they might do, such as drafting proofs.

How this translates into assessment

To structure this kind of experience, we've set up the following categories of assignments:

- Guided Inquiry assignments to structure the students' pre-class experiences of learning the foundational knowledge. Here's the first one. It doesn't quite have the "experimentation" flavor to it I described because we are just getting started, but it gives the idea. It's basically a wrapper around a Google Form. The wrapper gives the overview, learning objectives, and resources while the form contains the exercises. These are graded Satisfactory or Unsatisfactory on the basis of completeness and effort; "Satisfactory" is awarded for giving good-faith responses to each exercise and turning the form in on time.

- Foundations quizzes will assess fluency with foundational knowledge. Being fluent with definitions of terms, notation, etc. is critically important in algebra. These are graded Satisfactory or Progressing. Here's a sample of the first Foundations quiz, which will be given in a week or so, and here's a Google doc I am using to list the topics on each quiz and the criteria for "Satisfactory" for each one.

- Connections tests are timed assessments that get at higher-level learning tasks such as using theorems to draw conclusions given some situational data, doing more advanced computation, and outlining or analyzing proofs. These tests are broken into 3 to 6 sections, each of which targets a specific idea. The tests are given using a two-stage concept: For the first 45 minutes, students work on their tests individually. Then their work is collected, students are put into groups of 3 to 4, and a new version of the test consisting of a subset of problems from the individual stage (suitably modified for time) is given and done in small groups. Each section is graded Excellent, Satisfactory, Progressing, or Incomplete. Students get to keep the higher of the two grades from the individual and group stage on each section. Then the grade on the test is Excellent, Satisfactory, or Progressing depending on the grades on the sections.

- Finally there is a Proof Portfolio students do, involving solving proof-related problems from different groups of topics.
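The "keep the higher grade" rule for the two-stage Connections tests can be sketched in a few lines of Python. This is only an illustration, not the actual gradebook: the `Mark` enum, the `combine_stages` function, and the section labels are hypothetical names, and the sketch assumes that a section left out of the group stage simply keeps its individual mark. How section marks roll up into the overall test grade is defined in the syllabus and is not modeled here.

```python
from enum import IntEnum


class Mark(IntEnum):
    """Section marks, ordered so that a larger value is a better mark."""
    INCOMPLETE = 0
    PROGRESSING = 1
    SATISFACTORY = 2
    EXCELLENT = 3


def combine_stages(individual, group):
    """Keep the higher of the individual and group marks on each section.

    Assumption: the group stage covers only a subset of sections, so a
    section missing from `group` keeps its individual mark.
    """
    return {
        section: max(mark, group.get(section, mark))
        for section, mark in individual.items()
    }


# Hypothetical three-section test; section "C" was not on the group version.
individual = {"A": Mark.PROGRESSING, "B": Mark.SATISFACTORY, "C": Mark.INCOMPLETE}
group = {"A": Mark.EXCELLENT, "B": Mark.PROGRESSING}
final = combine_stages(individual, group)
```

In this example the student's section A mark rises to Excellent from the group stage, section B stays at Satisfactory because the individual mark was higher, and section C is unchanged.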

We will also have a final exam in the course, and students earn experience points (XP) for completing tasks that signify engagement in the course (attendance, filling out surveys, etc.).

How the grading system works

Here is the final syllabus for the course, which contains all the details about how the grades on individual items work. The Appendix in particular shows the "specs" for each individual form of work in the course.

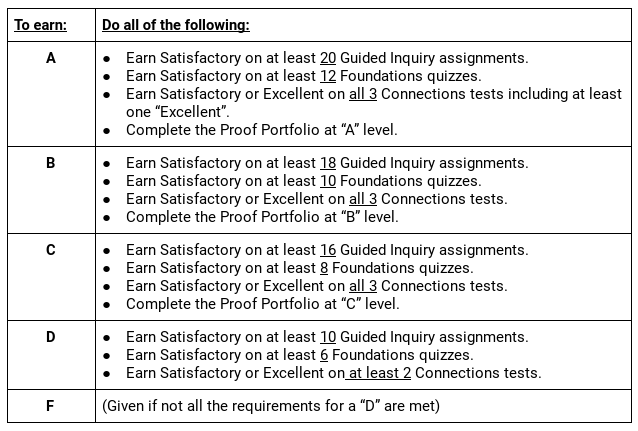

As with calculus, finding the course grade is a two-step process. First, the base grade is determined, which is just the A/B/C/D/F grade without plusses or minuses:

The current calendar has us doing 23 Guided Inquiry assignments and 13 Foundations quizzes. So to earn a "C", a.k.a. baseline competency, you would have to satisfactorily complete 70% of the Guided Inquiry, 60% of the Foundations, all of the Connections, and do what I defined as "C-level work" on the proofs. The thought here is that a "C" indicates reasonably OK performance on the higher levels and minimally acceptable performance on the lower levels. To earn higher grades, students have to do more, and do it better.
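To make the percentages concrete, here is a rough sketch of the C-level arithmetic in Python. The counts of 23 Guided Inquiry assignments and 13 Foundations quizzes come from the current calendar; rounding fractional counts up to the next whole assignment is an assumption on my part, and the function names are made up for illustration. The table in the syllabus is the authoritative version.

```python
GUIDED_INQUIRY_TOTAL = 23  # from the current course calendar
FOUNDATIONS_TOTAL = 13     # from the current course calendar


def minimum_count(total: int, percent: int) -> int:
    """Smallest whole number of items that is at least `percent` of `total`.

    Assumption: fractional requirements round up (16.1 items means 17).
    """
    return -(-total * percent // 100)  # integer ceiling division


def meets_c_baseline(gi_satisfactory: int,
                     foundations_satisfactory: int,
                     all_connections_met: bool,
                     c_level_proofs: bool) -> bool:
    """Check the four C-level requirements described above."""
    return (
        gi_satisfactory >= minimum_count(GUIDED_INQUIRY_TOTAL, 70)
        and foundations_satisfactory >= minimum_count(FOUNDATIONS_TOTAL, 60)
        and all_connections_met
        and c_level_proofs
    )


gi_needed = minimum_count(GUIDED_INQUIRY_TOTAL, 70)        # 17 of 23
foundations_needed = minimum_count(FOUNDATIONS_TOTAL, 60)  # 8 of 13
```

Under the round-up assumption, a "C" would take 17 satisfactory Guided Inquiry assignments and 8 satisfactory Foundations quizzes, along with the Connections and proof requirements.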

One big difference between this table and the one for Calculus is that there are no learning targets here. I started to write out Learning Targets for this course but quickly felt like it was Winter 2015 again, and the course was losing its coherence because of the focus on micro-sized learning objectives. The closest we come to having Learning Targets are the topic lists for Foundations quizzes, and there I simply sample from the list for individual quizzes rather than try to test everything.

So this makes my system a lot more like specifications grading and less like standards-based grading. I'm sometimes asked to explain the difference between these two forms of mastery grading and now I think I know the answer. It was recently put very succinctly by my friend Josh Bowman in a talk, in which he said "Standards reflect content, specifications reflect activity". In Calculus, the focus is on content (which for me includes both procedural and conceptual knowledge). In Modern Algebra, and I think in many other upper-division math courses, the focus is on broad areas of skill, again especially the dual categories of foundations and connections, not so much on individual pieces of content, which are important but not the point.

In Modern Algebra there's a more complex system for determining plus and minus modifiers once the base grade has been determined. The final exam and XP are the primary factors in finding the modifier, similarly to what I did in Calculus. But I also include some "near miss" situations; for example if you complete all the requirements for a base grade plus either the Connections or Proof Portfolio requirements for the next grade up, that gives you a "plus". The details are in the syllabus.

Finally, as with Calculus, there's a very robust system of revision and reassessment:

- Foundations quizzes can be retaken during two designated 75-minute sessions during the semester and at the final exam.

- Connections tests can be retaken once through a take-home process. Part of the rationale behind using a two-stage setup here was that it sort of provides an automatic revision, in the group stage. So in some sense students get two revisions: Once in their groups, and again through take-home.

- Proof Portfolio problems can be resubmitted as often as needed, with the restriction that no more than two proof submissions total (two new submissions, two revisions, or one of each) can be made in any given week. Students can spend a token to get a third submission. Also, each group of proof problems has a deadline, and problems whose initial submission comes after the deadline are accepted but may only be revised once. In my Discrete Structures course I only had one final deadline, but I found students were waiting until week 8 to submit proofs from work done in week 2, so hopefully the incentive of "unlimited" revisions for work submitted by a deadline will mitigate that.
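The Proof Portfolio submission rules above amount to a small decision procedure, sketched here in Python. The function names and example dates are hypothetical, and two details are assumptions the post does not settle: that a token buys at most one extra submission per week (so a fourth is never allowed), and that a problem first submitted on the deadline day itself counts as on time.

```python
from datetime import date
from typing import Optional

WEEKLY_FREE_SUBMISSIONS = 2  # new submissions and revisions combined


def tokens_needed(submitted_this_week: int, tokens_available: int) -> Optional[int]:
    """Tokens required for one more proof submission this week.

    Returns 0 or 1, or None if the submission is not allowed.
    Assumption: a token buys only a third submission, never a fourth.
    """
    if submitted_this_week < WEEKLY_FREE_SUBMISSIONS:
        return 0
    if submitted_this_week == WEEKLY_FREE_SUBMISSIONS and tokens_available >= 1:
        return 1
    return None


def revisions_allowed(first_submitted: date, deadline: date) -> Optional[int]:
    """Number of revisions a problem may receive; None means no limit.

    Assumption: a first submission on the deadline day is on time.
    """
    return None if first_submitted <= deadline else 1


# Hypothetical deadline for one group of proof problems.
deadline = date(2018, 10, 5)
on_time = revisions_allowed(date(2018, 10, 1), deadline)  # None: revise as needed
late = revisions_allowed(date(2018, 10, 8), deadline)     # 1: one revision only
```

The weekly cap still applies to problems with unlimited revisions, so "unlimited" here means no ceiling on the total, not on the pace.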

What I like/don't like/am not sure about

The main thing I like about this setup is that I think it will help students get more comfortable with writing proofs, particularly the role of failure in writing proofs. Most proofs are not correct the first time and need iteration. Even though they are seasoned veterans by the time they reach this course, many students still struggle with turning in work that needs significant revision. A lot of them see writing a proof as if it were defusing a bomb, where everything has to fit together just so, according to a system of rules for logic and grammar that they don't fully understand, or else the thing explodes and takes them with it. I want them to realize that writing a proof is like writing anything else – it's a process of reflection and iteration, and they should be unafraid to engage in it.

I also like that this system seems to provide equal footing for both parts of the "T" that I am aiming for: both foundations and connections, and space to make mistakes and correct oneself on each. Also, I like the two-stage testing idea – I have never used it before but a 75-minute class is the perfect environment for it.

As with Calculus and other classes in which I've used specs grading, I dislike how complicated this all is. It could certainly be simplified, but it would come at the expense of accuracy and fidelity to students' learning. Finding the right sweet spot between complexity and accuracy is very difficult.

Things I am not sure about yet:

- This class always gets me worried about academic dishonesty. It's so hard to come up with somewhat original proof problems, and so easy to search them up online. I hope that the revision process disincentivizes cheating – why plagiarize when you can just revise? – but I am sure academic dishonesty will come up at least once. I just hope it's not an epidemic.

- I'm wondering if the group stage of the Connections tests is going to be overwhelming for students who are introverts or have different neurological needs. Or just whether it will be too loud to think.

- There's considerably more timed assessment in the class than I used to have in an upper-level course, and I'm wondering if this is going to be a problem. For example, every time there's a Foundations quiz or Connections test, some student will miss it, and I will have to turn into a lawyer to determine whether the absence is legitimate and how to handle it.

- Finally, I am not sure what the unknown unknowns are. What should I be thinking about that I am currently not thinking about?

So that's the state of my mastery grading systems in Fall 2018. I'd be curious to know your thoughts, nitpicks, suggestions, and so on for any of this – just air them out on Twitter and @-reply me (@RobertTalbert) or email me to let me know.